OpenAI Keynote: Apps inside ChatGPT, agents, and the future of design

Apps inside ChatGPT, agent-focused solutions, and a new emphasis on voice, video, and photography.

I mentioned the other day that OpenAI's keynotes have been among the most interesting in tech, often breaking new ground. Today I'm sharing some highlights from the 2025 keynote (DevDay) and what they may mean for the products and services we design.

Apps inside ChatGPT

After some earlier experiments, ChatGPT can finally call apps from within the conversation, so you can take actions without leaving the interface. In everyday use, this could help with travel planning, house hunting, or even restaurant reservations. These are just a few cases; many other services are likely to follow into the conversational context.

Is this the same as Operator?

No. Operator was an "early access" feature. Despite some e-commerce tests, it centered on autonomous agents navigating a built-in browser and using existing service websites, which are often not prepared for AI agents. On top of that, any step requiring authentication raised privacy and security concerns, since OpenAI did not guarantee data confidentiality.

With apps in ChatGPT, integrations move into the conversation context, and authentication happens outside the agent's browser through a more secure and practical process. Instead of an agent visiting Booking.com to search and then report back, the results arrive natively in the conversation, in real time.
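To make the contrast concrete, here is a minimal sketch of the tool-calling pattern that conversation-native integrations generally rely on: the model emits a structured call (a tool name plus JSON arguments), and the app's own backend answers with structured data, instead of an agent reading a web page. Everything here is hypothetical: `search_hotels`, the `TOOLS` registry, and the sample hotels are illustrative, and this is not the actual Apps SDK interface (which OpenAI describes as built on the Model Context Protocol).

```python
import json
from typing import Callable

# Hypothetical tool registry; names and fields are illustrative, not the Apps SDK API.
TOOLS: dict[str, Callable[..., dict]] = {}


def tool(name: str):
    """Register a handler under a tool name the model is allowed to call."""
    def register(fn: Callable[..., dict]) -> Callable[..., dict]:
        TOOLS[name] = fn
        return fn
    return register


@tool("search_hotels")
def search_hotels(city: str, nights: int) -> dict:
    # A real app would query the partner's own backend; these hotels are made up.
    sample = [
        {"name": "Hotel A", "price_per_night": 120},
        {"name": "Hotel B", "price_per_night": 95},
    ]
    return {
        "city": city,
        "results": [{**h, "total": h["price_per_night"] * nights} for h in sample],
    }


def handle_tool_call(call_json: str) -> dict:
    """Dispatch a structured call emitted by the model to the matching handler."""
    call = json.loads(call_json)
    handler = TOOLS.get(call["name"])
    if handler is None:
        return {"error": f"unknown tool: {call['name']}"}
    return handler(**call["arguments"])


# The model would produce this JSON; here it is hard-coded for illustration.
result = handle_tool_call(
    '{"name": "search_hotels", "arguments": {"city": "Lisbon", "nights": 3}}'
)
print(result["results"][1]["total"])  # 285
```

For design, the interesting part is the shape of the exchange: the app returns structured data that the conversation can render as cards or lists, rather than text scraped from a page.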

Shared examples

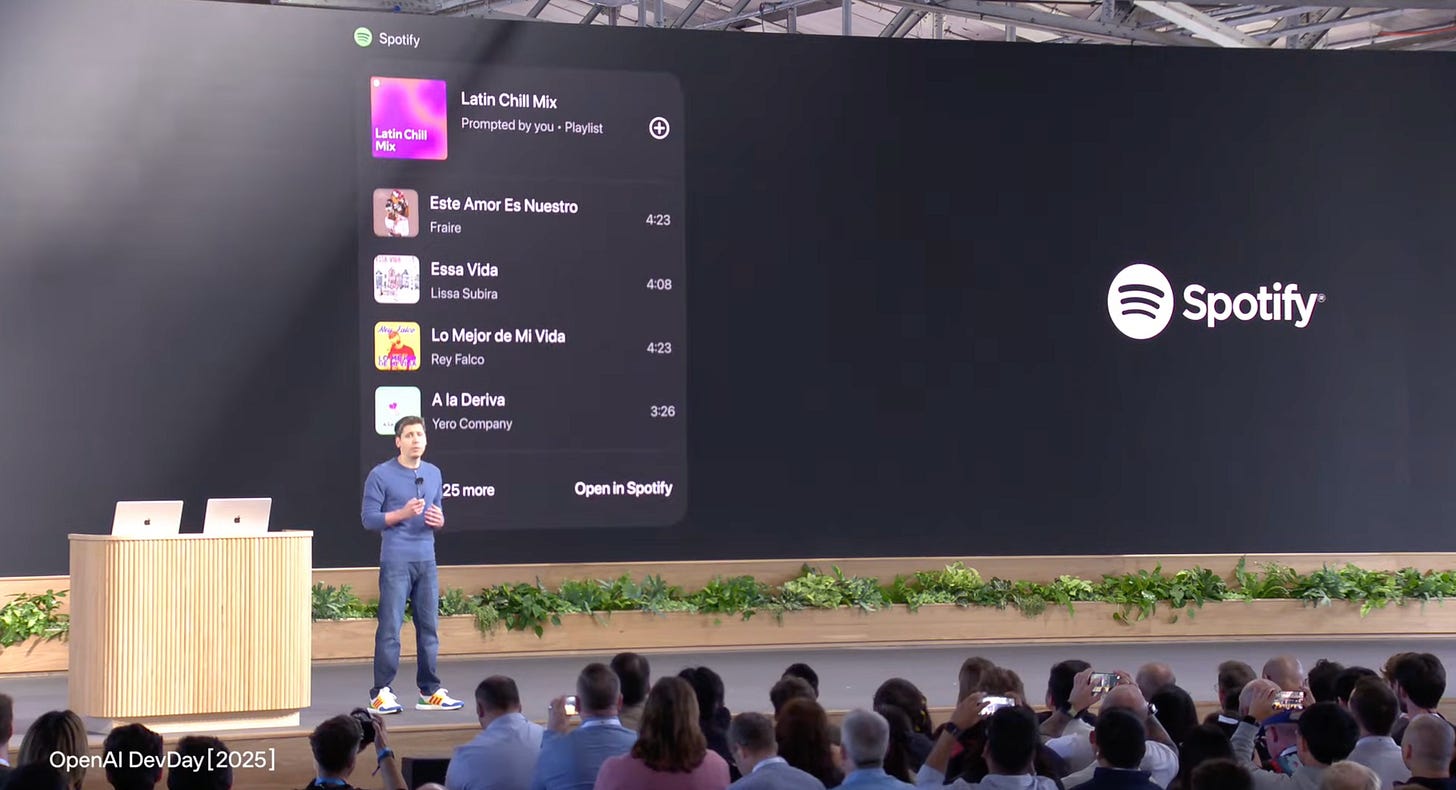

These apps are built and maintained by the companies themselves, which should significantly improve the experience compared to agents crawling regular websites. Announced partners include Booking.com, OpenTable, Uber, and Spotify, among others:

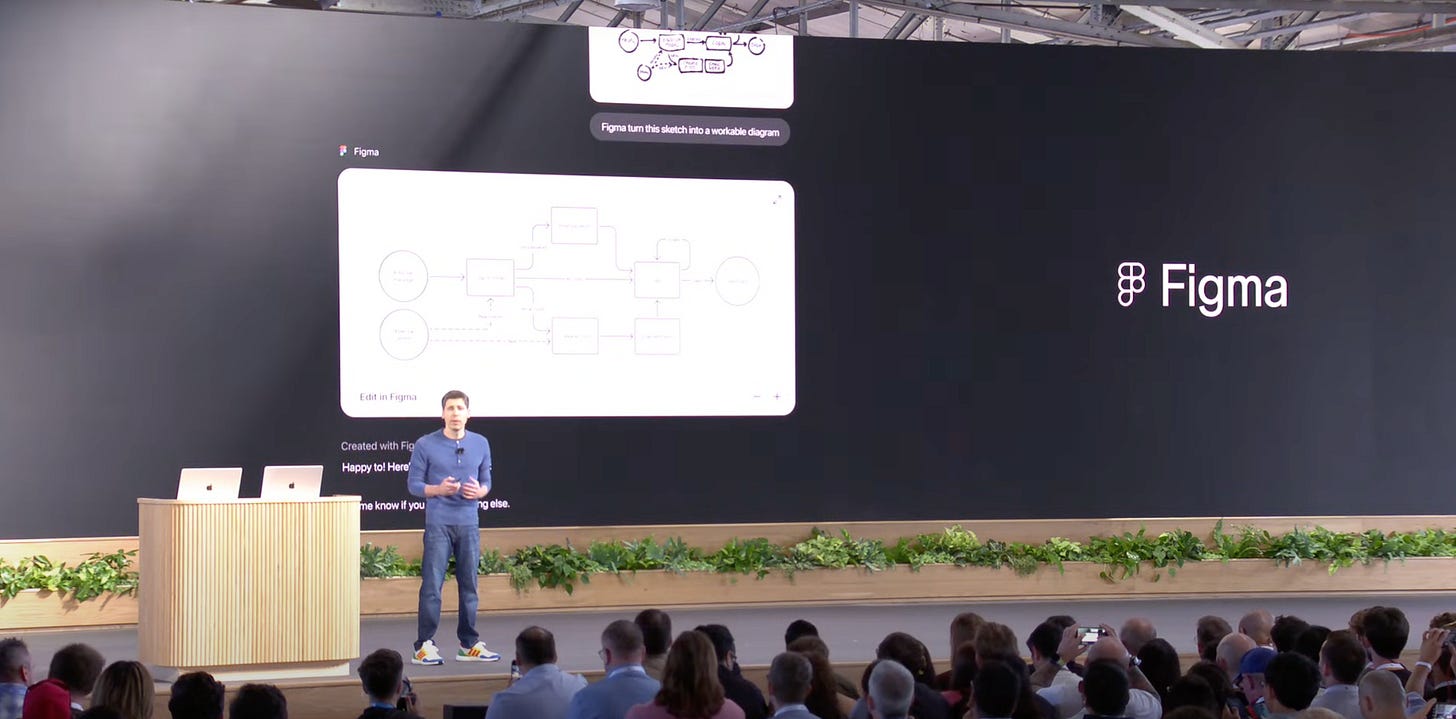

Imagine sketching a diagram and calling Figma inside ChatGPT to turn it into a proper, editable diagram in FigJam, where you can keep working on it.

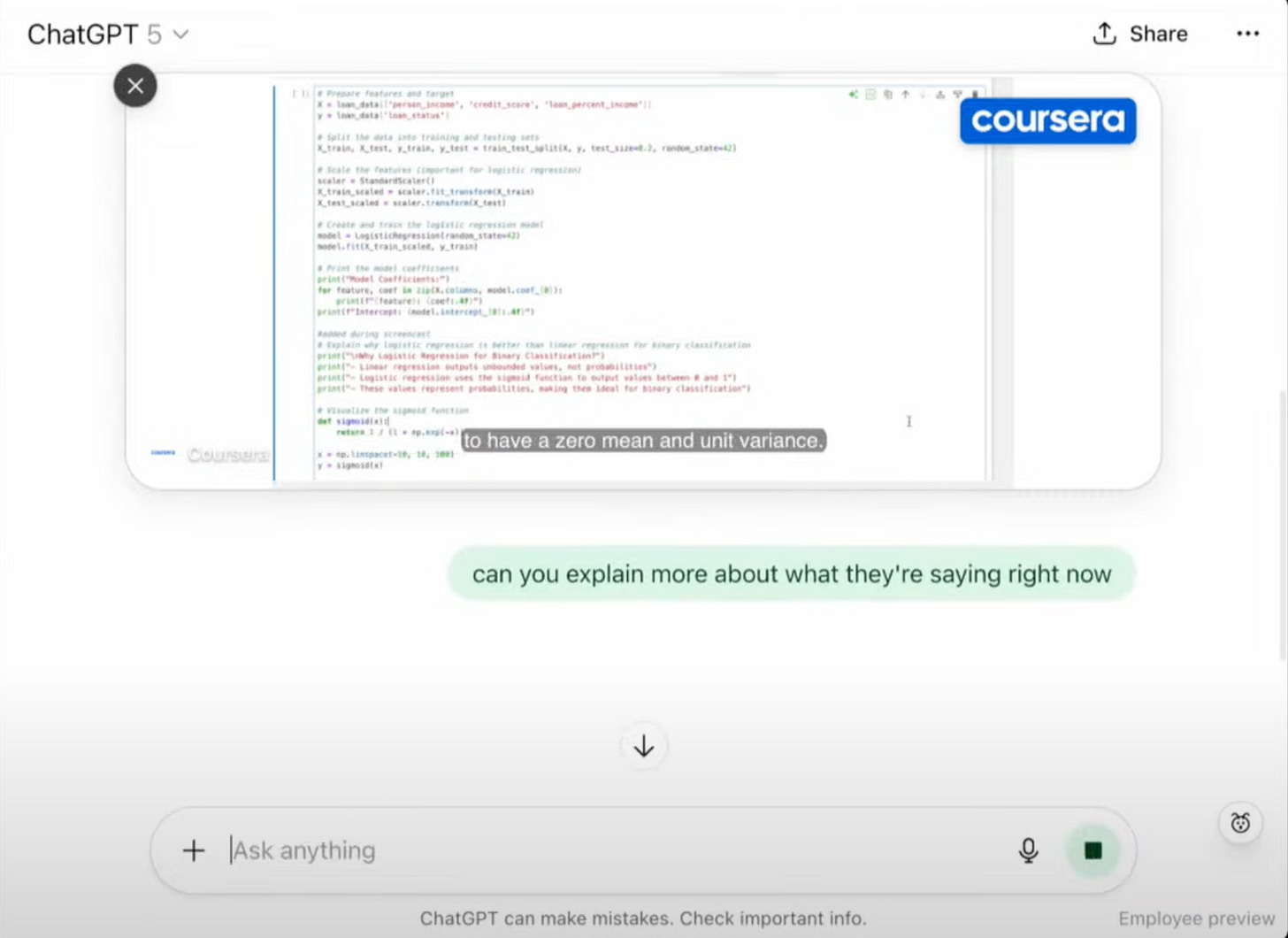

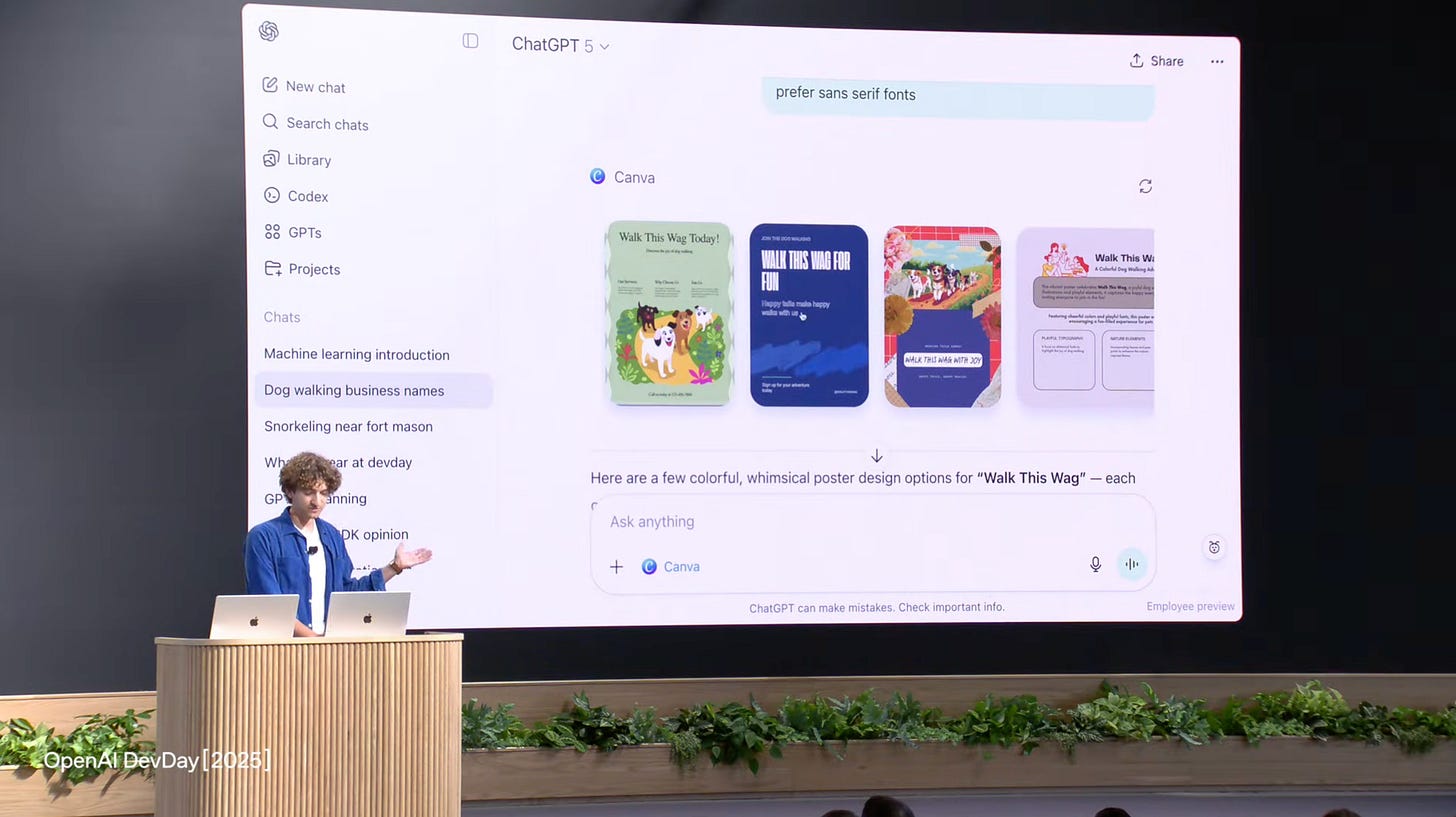

Other examples included Coursera, which can bring courses into the ChatGPT interface and lets you ask questions about a specific moment in a video, and Canva, which now also has an embedded experience inside ChatGPT.

Building agents with AgentKit

Agent Builder

The Agent Builder is a visual canvas for rapidly prototyping agents with logical steps. It makes it easier to test flows and publish agents with less friction.
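The idea of "logical steps" can be sketched in a few lines: a flow is a set of named steps, each of which updates shared state and decides which step runs next. This is a hypothetical illustration only; it is not Agent Builder's export format, and the keyword check in `classify` stands in for what would normally be a model call.

```python
from typing import Callable, Optional

# Hypothetical flow: each step updates shared state and returns the next step's name.
Step = Callable[[dict], Optional[str]]


def classify(state: dict) -> Optional[str]:
    # Stand-in for a model-based classifier: a simple keyword check.
    state["intent"] = "booking" if "book" in state["input"].lower() else "faq"
    return state["intent"]


def booking(state: dict) -> Optional[str]:
    state["output"] = "Routing to the booking agent."
    return None  # end of flow


def faq(state: dict) -> Optional[str]:
    state["output"] = "Answering from the help-center docs."
    return None  # end of flow


FLOW: dict[str, Step] = {"classify": classify, "booking": booking, "faq": faq}


def run_flow(user_input: str, start: str = "classify", max_steps: int = 10) -> dict:
    """Walk the flow from `start`, recording each visited step in `trace`."""
    state: dict = {"input": user_input, "trace": []}
    current: Optional[str] = start
    for _ in range(max_steps):  # guard against accidental cycles
        if current is None:
            break
        state["trace"].append(current)
        current = FLOW[current](state)
    return state


print(run_flow("I'd like to book a table for two")["trace"])  # ['classify', 'booking']
print(run_flow("What are your opening hours?")["trace"])  # ['classify', 'faq']
```

Keeping a `trace` of visited steps mirrors what makes a visual canvas useful for testing: you can see exactly which branch a given input took.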

Take a look at the example video shared by OpenAI:

ChatKit

ChatKit lets you embed a chat interface in your own product and connect its conversational components to agents that execute tasks, creating native experiences with visual elements and tools inside the dialogue itself.

New Evals

OpenAI has been investing in Evals to assess agent quality. How to measure agent experiences is still an open discussion, but OpenAI has shown a promising path, including automatic prompt optimization.
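At its core, an eval is a set of test cases, a grader, and a score. Below is a minimal, hypothetical sketch of that loop: `toy_agent` stands in for a real agent, and the "answer must contain a keyword" grader is a deliberately simple assumption. Production tooling, OpenAI's Evals included, offers richer graders than this.

```python
from typing import Callable

# Hypothetical eval cases: an input plus a keyword the answer must contain.
CASES = [
    {"input": "Table for 2 at 8pm", "must_contain": "confirmed"},
    {"input": "Cancel my booking", "must_contain": "cancelled"},
    {"input": "Do you have vegan options?", "must_contain": "vegan"},
]


def toy_agent(prompt: str) -> str:
    # Stand-in for a real agent call; it cannot handle the third case.
    p = prompt.lower()
    if "table" in p:
        return "Your table is confirmed."
    if "cancel" in p:
        return "Your booking has been cancelled."
    return "Sorry, I can't help with that."


def run_evals(agent: Callable[[str], str], cases: list[dict]) -> dict:
    """Run every case, grade it, and report the pass rate plus the failures."""
    results = []
    for case in cases:
        answer = agent(case["input"])
        passed = case["must_contain"].lower() in answer.lower()
        results.append({"input": case["input"], "answer": answer, "passed": passed})
    pass_rate = sum(r["passed"] for r in results) / len(results)
    return {"pass_rate": pass_rate, "failures": [r for r in results if not r["passed"]]}


report = run_evals(toy_agent, CASES)
print(f"{report['pass_rate']:.0%}")  # 67%
```

The list of failures is often more useful to a designer than the score itself, since it shows which conversations break and why.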

Agents + connectors

Connectors are coming to enrich how ChatGPT is used, with secure connections to apps like Google Drive and GitHub. They let you search files, create content directly in the destination app, and reference those materials in the conversation.

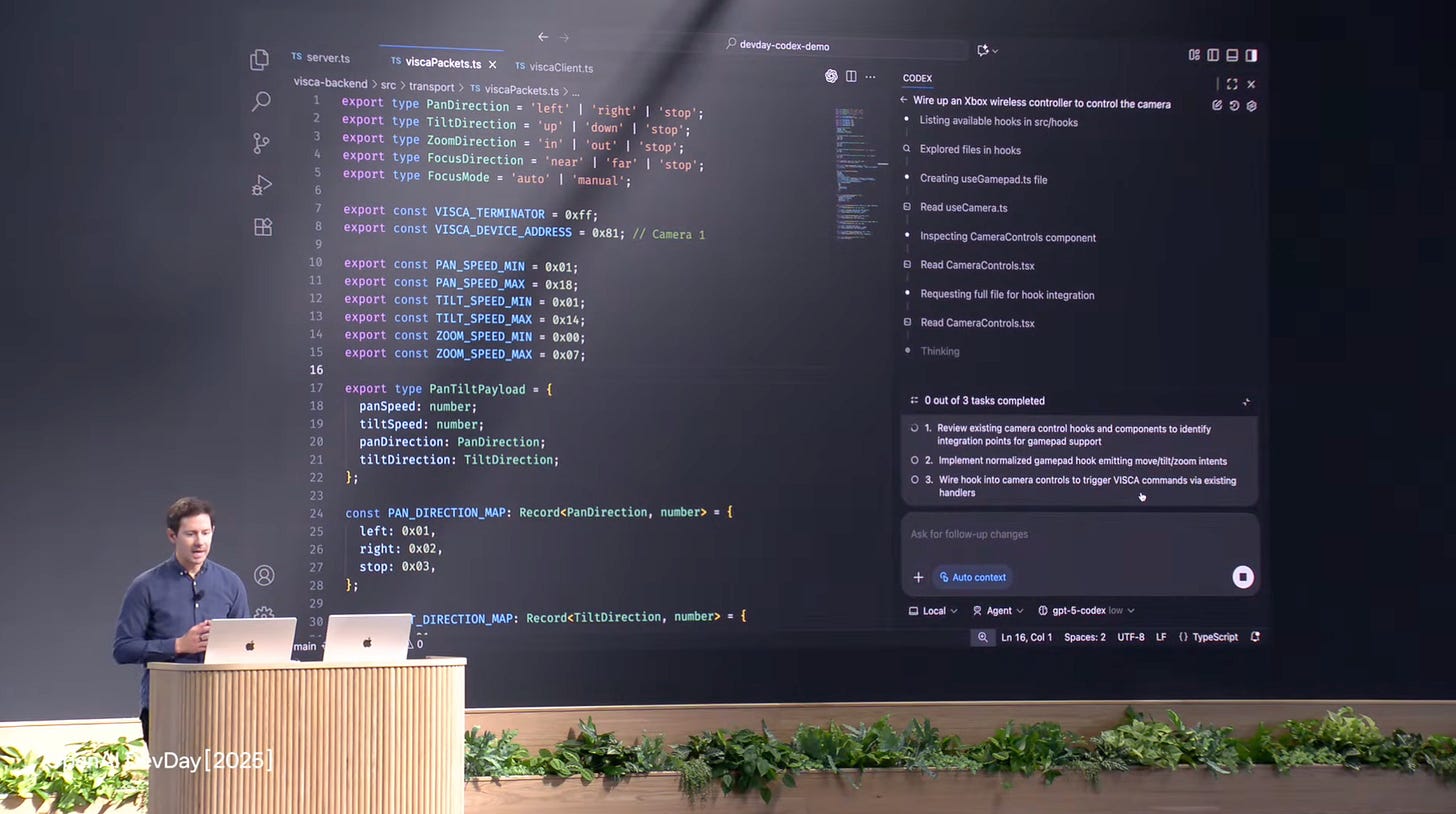

New Codex

The new Codex brings improvements for navigating repositories, editing files, running commands, and executing tests. For designers, perhaps the simplest option is to use it through the IDE extension in Cursor or VS Code.

(Over the past few weeks I've been trying to build an app using only Codex; I'll share the result later!)

Voice as the primary form of interaction in the future?

Sam Altman said he believes voice will become the primary way of interacting with AI. The team has been working on models that lower the cost of real-time voice. This could transform many experiences and services as we know them.

Sora news

Sora 2 is now available through the OpenAI API, and the keynote showed advances in image, video, and audio generation (ambient sound and voices).

Another highlight was the Mattel example: from a sketch, it was possible to generate a video to visualize the final product.

What does this mean for Design?

These updates bring new challenges and opportunities for Product and Design.

It is crucial to think about services for the "agentic era", including integrations with ChatGPT.

Designers need to understand how agents work, their capabilities, and adapt their working framework to this reality.

Conversational interfaces will gain weight under technological pressure, but it is essential to balance product decisions against real usability outside the tech bubble.

Measuring agent quality becomes increasingly important; designers should dive into metrics and qualitative evaluation of these experiences.

Image and video generation capabilities are getting better and better, offering new ways to make ideas and concepts tangible.

One last bonus before closing

OpenAI has been releasing support materials for this new era of agents. I'd highlight its practical guide to building agents:

https://cdn.openai.com/business-guides-and-resources/a-practical-guide-to-building-agents.pdf

Any questions? Leave them in the comments.